By Ashok Dutta | Published with permission: New Technology Magazine | October 2011

Risks and rewards are finely balanced in the hydrocarbons industry, and apart from the oil and gas companies themselves, probably no one understands the nuances better than Tom Smith, founder and president of Geophysical Insights.

In its first stage, the Houston-based software technology firm has developed a new technology based on unsupervised neural networks (UNN). Smith and his team are pioneering the application of UNN to the interpretation of 3-D seismic response data. Currently offered as a service, the UNN technology has been applied successfully by mid-size to large energy companies.

And looking ahead, Geophysical Insights plans to launch a full-scale commercial software product suite in 2012, which will be scalable to fit the needs of small independents to oil and gas majors.

“In the E&P [exploration and production] industry, we have to analyze large volumes of data that come in different formats,” says Smith. “Today, seismic interpretation involves six to 100 attributes, each of which constitutes a 3-D data volume. [For a 3-D seismic survey] three kinds of images emerge, along with subtle combinations of data in higher dimensionality. The key to success lies in categorizing and interpreting all attributes simultaneously. That is the initial problem we are addressing using UNN technology.”
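To make “all attributes simultaneously” concrete, each seismic sample can be treated as a single point whose coordinates are its attribute values. The Python sketch below is a minimal illustration, not Geophysical Insights’ code. It assumes each attribute volume is a nested list indexed by inline, crossline and time sample, with all volumes the same shape. The function name `stack_attributes` and the z-score scaling are assumptions, chosen so that attributes measured in different units carry comparable weight in later distance calculations.

```python
from statistics import mean, pstdev


def stack_attributes(volumes):
    """Turn equally shaped 3-D attribute volumes into one vector per sample.

    Hypothetical helper: each attribute is standardized to zero mean and
    unit variance (an assumed preprocessing step) so that no single
    attribute dominates distance calculations.
    """
    # Flatten each volume (nested lists indexed [inline][crossline][time]).
    flat = [[v for plane in vol for trace in plane for v in trace] for vol in volumes]
    n = len(flat[0])
    if any(len(f) != n for f in flat):
        raise ValueError("all attribute volumes must have the same shape")
    scaled = []
    for f in flat:
        mu, sd = mean(f), pstdev(f)
        # A constant attribute carries no information; map it to zeros.
        scaled.append([(x - mu) / sd if sd > 0 else 0.0 for x in f])
    # Transpose: one row per sample, one column per attribute.
    return [list(row) for row in zip(*scaled)]
```

With 13 attributes, for example, every sample becomes a 13-element vector, and any clustering method can then work on all attributes at once.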

“For any seismic survey, there may be many seismic attribute volumes. Our initial step is to use principal component analysis to focus on the seismic attributes which are relevant for the study,” says Felix Balderas, information and system architect consultant with Geophysical Insights. “Then we process the selected volumes through the UNN. It’s effectively a quantization technique that reduces multi-dimensional data, which can be incomprehensible, into something that is understandable by humans. The process creates a new volume of data and identifies specific areas of interest within the survey. We call the resulting areas of interest ‘anomalies.’ UNN’s ability to identify anomalies results in time savings by focusing the interpreter’s attention on potential plays. It reduces risk for oil and gas companies if they avoid areas where there are no anomalies.”
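The article does not say how principal component analysis is used to choose attributes. One plausible rule, sketched here purely as an assumption, is to rank attributes by how strongly they load on the first principal component and to report how much of the total variance that component explains. The sketch uses only the Python standard library and power iteration. `rank_attributes` and the ranking rule are hypothetical.

```python
import math
import random


def covariance(rows):
    """Sample covariance matrix of a list of equal-length attribute vectors."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    cov = [[0.0] * d for _ in range(d)]
    for r in rows:
        c = [r[j] - means[j] for j in range(d)]
        for i in range(d):
            for j in range(d):
                cov[i][j] += c[i] * c[j]
    return [[v / (n - 1) for v in row] for row in cov]


def first_principal_component(cov, iters=500, seed=0):
    """Leading eigenvector and eigenvalue of a symmetric matrix via power iteration."""
    rng = random.Random(seed)
    d = len(cov)
    v = [rng.random() + 0.1 for _ in range(d)]
    for _ in range(iters):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0:
            break
        v = [x / norm for x in w]
    # Rayleigh quotient of the unit vector v gives the eigenvalue estimate.
    eigenvalue = sum(v[i] * sum(cov[i][j] * v[j] for j in range(d)) for i in range(d))
    return v, eigenvalue


def rank_attributes(rows, names):
    """Rank attributes by |loading| on the first component (assumed rule)."""
    cov = covariance(rows)
    pc1, lam = first_principal_component(cov)
    total = sum(cov[i][i] for i in range(len(cov)))
    ranked = sorted(zip(names, pc1), key=lambda p: abs(p[1]), reverse=True)
    return ranked, (lam / total if total else 0.0)
```

Attributes near the bottom of the ranking contribute little to the dominant variation and would be the first candidates to drop before running the neural network.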

For any seismic data interpreter, the potential benefits are hard to ignore. With fossil fuels a finite resource, time and money are of the essence for energy firms under increasing pressure to make new discoveries. In Organization of Petroleum Exporting Countries (OPEC) and non-OPEC member states alike, with oil prices remaining north of US$80 per barrel, the industry’s watchword is scouting for new reserves.

Undoubtedly, a major challenge they must overcome is interpreting seismic data insightfully. “This is an issue of yesterday, today and tomorrow. But with UNN there are certain advantages, as the technology is focused on isolating the anomalies in any survey and presenting a clearer picture,” says Smith.

According to Smith, the neural network becomes, in essence, a “learning machine” that adapts to the characteristics of the data, producing what are called self-organizing maps. The input data are unclassified and the learning process is unattended. That is, no well log or reference data is required to calibrate the UNN at specific geographic locations.

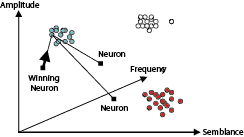

“We introduce neurons in random coordinates and they are ‘attracted’ to data points via a mathematical methodology. The neurons will go through a learning process and then assign to each data point a winning neuron,” Smith says. “In the ‘learning’ stage, neurons are attracted to data samples in the clusters in a recursive process. Ultimately, after neuron movement has finished, the neurons reveal subtle combinations of attributes that may highlight the presence and type of hydrocarbons.”
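The learning process Smith describes resembles a classic Kohonen self-organizing map. Neurons start at random positions in attribute space and are pulled toward data samples. Each sample is then assigned its closest, or winning, neuron. The sketch below is a generic SOM built on stated assumptions: a rectangular neuron grid, a Gaussian neighbourhood, and a linearly decaying learning rate and radius. It is not the company’s algorithm, and all names and parameter values are illustrative.

```python
import math
import random


def winning_neuron(neurons, x):
    """Index of the neuron closest to sample x (squared Euclidean distance)."""
    return min(range(len(neurons)),
               key=lambda i: sum((a - b) ** 2 for a, b in zip(neurons[i], x)))


def train_som(samples, rows=4, cols=4, epochs=20, lr0=0.5, seed=0):
    """Train a small self-organizing map on attribute vectors (generic sketch)."""
    rng = random.Random(seed)
    dim = len(samples[0])
    lo = [min(s[k] for s in samples) for k in range(dim)]
    hi = [max(s[k] for s in samples) for k in range(dim)]
    # Neurons start at random coordinates inside the data's bounding box.
    neurons = [[rng.uniform(lo[k], hi[k]) for k in range(dim)]
               for _ in range(rows * cols)]
    sigma0 = max(rows, cols) / 2.0
    total = epochs * len(samples)
    step = 0
    for _ in range(epochs):
        order = list(samples)
        rng.shuffle(order)
        for x in order:
            frac = step / total
            lr = lr0 * (1.0 - frac)               # learning rate decays linearly
            sigma = sigma0 * (1.0 - frac) + 0.5   # neighbourhood radius shrinks
            w = winning_neuron(neurons, x)
            wr, wc = divmod(w, cols)
            # Every neuron is attracted toward x; the winner and its grid
            # neighbours move the most (Gaussian weighting on grid distance).
            for i, n in enumerate(neurons):
                r, c = divmod(i, cols)
                h = math.exp(-((r - wr) ** 2 + (c - wc) ** 2) / (2 * sigma ** 2))
                for k in range(dim):
                    n[k] += lr * h * (x[k] - n[k])
            step += 1
    return neurons


def classify(neurons, samples):
    """Assign each sample the index of its winning neuron."""
    return [winning_neuron(neurons, x) for x in samples]
```

Because no labels enter `train_som`, the map organizes itself purely from the attribute values, which matches the article’s point that no well log or reference data is needed for calibration.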

Upon completion of the learning process, the neurons identify anomalies in the data: materials that differ from their surroundings because of variations in the seismic attributes at those locations. By revealing the shapes and sizes of these anomalies, the process points to locations that deserve additional study.
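Once every sample has a winning neuron, a time slice can be viewed as a map of neuron labels. Regions whose label differs from their surroundings then become candidate anomalies whose shapes and sizes can be measured. The minimal sketch below assumes a 2-D grid of integer labels and treats the most common label as background. Both choices are assumptions rather than details from the article. It groups 4-connected cells with a breadth-first flood fill, and the function names are hypothetical.

```python
from collections import Counter, deque


def label_regions(grid):
    """Group 4-connected cells sharing the same winning-neuron label."""
    nrows, ncols = len(grid), len(grid[0])
    seen = [[False] * ncols for _ in range(nrows)]
    regions = []
    for r0 in range(nrows):
        for c0 in range(ncols):
            if seen[r0][c0]:
                continue
            label = grid[r0][c0]
            cells = []
            queue = deque([(r0, c0)])
            seen[r0][c0] = True
            while queue:  # breadth-first flood fill
                r, c = queue.popleft()
                cells.append((r, c))
                for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    nr, nc = r + dr, c + dc
                    if (0 <= nr < nrows and 0 <= nc < ncols
                            and not seen[nr][nc] and grid[nr][nc] == label):
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            rs = [r for r, _ in cells]
            cs = [c for _, c in cells]
            regions.append({"label": label, "size": len(cells),
                            "bbox": (min(rs), min(cs), max(rs), max(cs))})
    return regions


def candidate_anomalies(grid):
    """Regions whose label differs from the most common (background) label."""
    background = Counter(v for row in grid for v in row).most_common(1)[0][0]
    return [reg for reg in label_regions(grid) if reg["label"] != background]
```

Each result reports a region’s size in cells and its bounding box (top row, left column, bottom row, right column), a simple proxy for the shape and extent of an anomaly.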

Geophysical Insights applies unsupervised neural networks to the interpretation of 3-D seismic data. The new volume of data created by the process identifies specific areas of interest within a survey. A neuron “learns” by adjusting its position within the attribute space as it is drawn toward nearby data points. The winning neuron is the one that is closest to the selected data point.

The company’s current pricing model is based on the size of the appraisal area. It is currently analyzing acreages ranging from 50 to 800 square miles, both onshore and offshore.

“The technology is now being offered as a service and we are gearing to launch a suite of software products next year,” says Hal Green, the acting director of business development. “The software pricing model is being worked out and will be available with the introduction of the product suite in 2012.”

Green elaborated on the “service” offer for oil and gas companies, stating that a prospective client typically selects a geographical appraisal area that is captured in a single SEG-Y file (a file format developed by the Society of Exploration Geophysicists).
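For readers unfamiliar with SEG-Y, a standard file opens with a 3,200-byte textual header and a 400-byte big-endian binary header. Traces follow, each carrying its own 240-byte trace header. The Python sketch below reads a few binary-header fields and estimates the trace count. It assumes fixed-length traces and no extended textual headers, and `read_segy_summary` is a hypothetical helper, not part of any vendor’s toolkit. For production work, the SEG-Y specification or an established reader library is the safer route.

```python
import os
import struct

TEXT_HEADER_BYTES = 3200
BINARY_HEADER_BYTES = 400
TRACE_HEADER_BYTES = 240
# Bytes per sample for common SEG-Y data sample format codes
# (1 = IBM float, 2 = 32-bit int, 3 = 16-bit int, 5 = IEEE float, 8 = 8-bit int).
BYTES_PER_SAMPLE = {1: 4, 2: 4, 3: 2, 5: 4, 8: 1}


def read_segy_summary(path):
    """Read key binary-header fields; assumes fixed-length traces and
    no extended textual headers."""
    with open(path, "rb") as f:
        f.seek(TEXT_HEADER_BYTES)
        binary = f.read(BINARY_HEADER_BYTES)
    if len(binary) < BINARY_HEADER_BYTES:
        raise ValueError("file too short to contain a SEG-Y binary header")
    # File bytes 3217-3218, 3221-3222 and 3225-3226, big-endian unsigned shorts.
    (sample_interval_us,) = struct.unpack(">H", binary[16:18])
    (samples_per_trace,) = struct.unpack(">H", binary[20:22])
    (format_code,) = struct.unpack(">H", binary[24:26])
    if format_code not in BYTES_PER_SAMPLE:
        raise ValueError(f"unsupported data sample format code {format_code}")
    trace_bytes = TRACE_HEADER_BYTES + samples_per_trace * BYTES_PER_SAMPLE[format_code]
    data_bytes = os.path.getsize(path) - TEXT_HEADER_BYTES - BINARY_HEADER_BYTES
    return {
        "sample_interval_us": sample_interval_us,
        "samples_per_trace": samples_per_trace,
        "format_code": format_code,
        "trace_count": data_bytes // trace_bytes,
    }
```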

“Without an initial investment on the part of the client, we perform an analysis on that entire region/file based on an initial set of 13 attributes using our technology. At that point, we ask the client to identify a sample area representing not more than 10 per cent of the whole appraisal area,” he says.
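The sample-area rule is simple arithmetic. For the largest appraisal areas the company mentions, 800 square miles, a sample of at most 80 square miles qualifies. For a 50-square-mile appraisal, the cap is 5 square miles. The tiny sketch below implements that check. Its function name is hypothetical. It scales both sides by 100 rather than multiplying by 0.10, which avoids floating-point rounding at the boundary.

```python
def sample_area_ok(sample_sq_mi, appraisal_sq_mi, max_percent=10):
    """True if the sample area is positive and at most max_percent of the appraisal area."""
    # Compare sample / appraisal <= max_percent / 100 without dividing.
    return 0 < sample_sq_mi and sample_sq_mi * 100 <= appraisal_sq_mi * max_percent
```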

As a next step, Geophysical Insights presents the detailed results of the UNN analysis on the selected sample area and a summary of the findings across the whole appraisal area. If the client finds those results promising, the two parties enter into a mutually acceptable technical and commercial relationship that provides the oil and gas company with complete details of the analysis across the entire appraisal area, including supporting conclusions in writing from Smith.

Irrespective of the eventual price, Smith is sanguine about rising future demand for the product. A growing shortage of geoscientists in the industry, primarily due to retirements, means oil and gas companies will increasingly rely on technology to interpret seismic data and better understand the geology.

“We see the use of UNN not just in seismic surveys, but also in downhole measurements, monitoring production processes and more,” he says.

PPDM

Despite its initial success, Geophysical Insights is not resting on its laurels and is pursuing further game-changing, “disruptive” technologies.

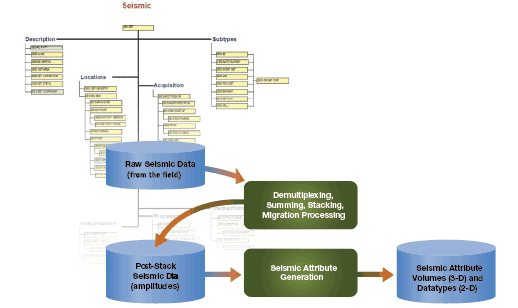

In a white paper presented early this year at an industry conference in Houston, Ken Cooley, a consultant to Geophysical Insights, highlighted the benefits of the company’s plans to implement its seismic data storage in the Professional Petroleum Data Management (PPDM) Association model.

Calgary-based PPDM is a global, not-for-profit standards organization that works collaboratively with industry to create and publish data management standards for the petroleum industry. Cooley’s paper described a pattern of “populating” seismic survey meta-data to unambiguously store and access the data in a PPDM database through standard methods.

“PPDM has a dedicated model for storing seismic data, and post-stack data are already stored in large binary files,” he says. “We use this model to store post-stack meta-data and keep the binary files separately on disk. Currently, 130 different tables are in use and the number is growing. In the future, we can extend PPDM to support new data.”
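The pattern Cooley describes keeps meta-data in database tables and bulk binary data on disk. A deliberately simplified catalog can illustrate the idea. The table and column names below are hypothetical and are not the PPDM schema, which defines its own, far richer, seismic tables. The sketch uses Python’s built-in `sqlite3` module only to show a volume’s meta-data pointing at the file path of its post-stack binary data.

```python
import sqlite3


def create_catalog(conn):
    """Create a hypothetical, simplified meta-data table (NOT the PPDM schema)."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS seismic_volume (
               volume_id          TEXT PRIMARY KEY,
               survey_name        TEXT NOT NULL,
               attribute_name     TEXT NOT NULL,
               sample_interval_us INTEGER,
               file_path          TEXT NOT NULL  -- binary volume stays on disk
           )"""
    )


def register_volume(conn, volume_id, survey, attribute, interval_us, path):
    """Record meta-data for one post-stack volume; the data itself is not copied."""
    conn.execute(
        "INSERT INTO seismic_volume VALUES (?, ?, ?, ?, ?)",
        (volume_id, survey, attribute, interval_us, path),
    )


def volumes_for_survey(conn, survey):
    """Look up attribute names and on-disk file paths for a survey."""
    cur = conn.execute(
        "SELECT attribute_name, file_path FROM seismic_volume "
        "WHERE survey_name = ? ORDER BY attribute_name",
        (survey,),
    )
    return cur.fetchall()
```

Keeping only paths and descriptive fields in the database keeps queries fast, while the large binary volumes are read directly from disk when needed.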

The verdict is eagerly awaited; in the meantime, Smith has high expectations for UNN.

REDUCING RISK

The success of Geophysical Insights’ seismic interpretation technology lies in categorizing and interpreting all attributes of the data, of which there may be six to 100, simultaneously. The company plans to implement its seismic data storage in the Professional Petroleum Data Management (PPDM) Association model and has outlined a pattern of “populating” seismic survey meta-data to unambiguously store and access the data in a PPDM database through standard methods.
